Abstract

AI agents are getting better fast, and they are already useful in production. But most enterprise value will not come from “agent orchestration” layered onto today’s workflows. The step change comes from task displacement and task-native orchestration: breaking work into modular tasks, routing those tasks to the right execution layer (AI, software, humans), verifying quality, and measuring unit economics at the task level.

This paper makes the case for a task-native operating model, explains why AI alone will not transform regulated and exception-heavy industries anytime soon, and uses insurance distribution as the clearest stress test.

The Moment We Are In: Capital, Narrative, and the Wrong Abstraction

We are living through an unprecedented investment cycle in foundation models. The narrative is moving faster than enterprise reality can move. That mismatch is the trap.

Most industries do not fail to transform because they lack intelligence. They fail because work is not designed to be measurable, routable, and improvable. Decision rights are unclear. Systems are fragmented. Data is incomplete. Exceptions dominate the long tail. Change in people and process moves more slowly than model capability.

This is why so many AI deployments create excitement, then flatten into incremental gains.

Economists have been here before. Erik Brynjolfsson has argued that “awesome technology alone is not enough,” and that real productivity requires business process updates, reskilling, and sometimes major organizational changes. McKinsey frames this as a productivity “J-curve,” in which an initial drag precedes larger benefits that appear only once workflows and org structures catch up.

The implication is uncomfortable but useful:

Intelligence is not the bottleneck. Operational design is.

The Core Thesis: Agents Are an Execution Layer; Tasks Are the Unit of Transformation

The agent story is directionally right and economically incomplete.

The right unit of analysis is not “the agent.” It is “the task.”

This is not a semantic distinction. It is the difference between incremental productivity and structural margin change.

A job is a bundle of tasks. Automation substitutes for some tasks and complements others. Brookings summarizes this clearly: automation tends to affect tasks within occupations rather than eliminating entire occupations.

So the winning question is not:

“Can an agent do this role?”

It is:

- “What are the tasks inside this workflow?”

- “Which tasks can be displaced, simplified, or eliminated?”

- “Which tasks require licensed authority or human judgment?”

- “How do we route work to the cheapest layer that still meets quality and compliance?”

- “How do we guide and equip a lower-level knowledge worker to act as a higher-level one?”

When you adopt a task-first lens, “AI agents” become one instrument in a broader system that also includes software automations, human specialists, playbooks, and quality control.

Why AI Alone Will Not Transform Regulated, Exception-Heavy Industries Soon

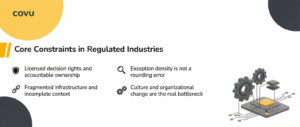

This is where the agent hype breaks on reality. In regulated industries, the core constraints are not purely technical, and many will not disappear on the model’s timeline.

Licensed decision rights and accountable ownership

A large share of meaningful actions in insurance require licensed authority or clear accountability. Even when AI can perform an action, governance still demands ownership, auditability, escalation paths, and liability controls.

Fragmented infrastructure and incomplete context

Agents do not automatically unify legacy systems, guarantee data provenance, or create integrations. Without dependable context, “acting” becomes guessing. In regulated environments, guessing becomes a cost.

Exception density is not a rounding error

In many regulated workflows, the long tail is the business. Exceptions drive rework, cycle time, and customer dissatisfaction. Systems must detect uncertainty and escalate, rather than pretending autonomy is universal.
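
As a minimal sketch of what "detect uncertainty and escalate" could look like in practice, the snippet below assumes each AI step reports a confidence score and a list of inputs it could not find. The `StepResult` type, the field names, and the 0.9 threshold are illustrative assumptions, not a description of any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepResult:
    """Hypothetical output of one AI step on a task."""
    answer: str
    confidence: float                      # assumed calibrated score, 0.0 to 1.0
    missing_context: tuple[str, ...] = ()  # inputs the step could not find


def next_action(result: StepResult, threshold: float = 0.9) -> str:
    """Escalate when context is missing or confidence is below the threshold."""
    if result.missing_context:
        return "escalate: missing " + ", ".join(result.missing_context)
    if result.confidence < threshold:
        return "escalate: low confidence"
    return "proceed"


print(next_action(StepResult("renew as-is", 0.97)))                  # proceed
print(next_action(StepResult("renew as-is", 0.97, ("loss_runs",))))  # escalate: missing loss_runs
print(next_action(StepResult("endorse vehicle", 0.62)))              # escalate: low confidence
```

Missing context is checked before confidence on purpose: a confident answer built on absent inputs is exactly the "guessing" that regulated workflows cannot absorb.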

Culture and organizational change are the real bottleneck

BCG’s work on insurers scaling AI is blunt: “70% of the problems in scaling AI have to do with people, organizational issues, and processes,” and it notes a cultural mismatch between actuarial precision and probabilistic AI outcomes.

This reinforces the operational thesis: If you do not change how work gets done, agents will produce localized wins and systemic disappointment.

Insurance as a Stress Test

Insurance distribution is “hard mode” for AI:

- Regulated and licensed

- Document-heavy

- Service-heavy

- Exception-dense

- Fragmented across portals, PDFs, inboxes, and legacy systems

This makes it the cleanest proving ground for the task-native model.

It also explains why “agent-first” tends to underdeliver. The agent can draft an email, summarize a policy, or fill a form, but the real cost and risk live in the end-to-end process: intake, missing context, tool failures, compliance steps, and exceptions.

The primary economic story is not “an agent that can do more.” It is “a system that changes the cost curve of servicing and operations.”

The Prerequisite: Reinvent Workflows and Build the Connectivity Layer

Task displacement is not a slogan. It only works when work becomes executable by design.

The baseline requirement is workflow reinvention: decomposing work into discrete tasks with clear inputs and outputs, defined decision rights, embedded playbooks, and verification built into the flow. Without this, AI remains an assistant bolted onto ambiguity.

But workflow structure alone is not enough in insurance. Most execution breaks because the world is not connected.

Risk lives on balance sheets. Pricing lives in underwriting intent and carrier appetite. Customer needs live in a messy and changing real-world context. Distribution sits in the middle, translating risk economics into customer action, but the translation is constrained by fragmented systems and missing data.

AI cannot solve this by reasoning harder over incomplete inputs. The prerequisite is a connectivity and data layer that can pull signal from systems of record, normalize it, and make it usable in execution.

That includes connecting policy and account context, carrier rules and constraints, pricing signals, and operational history, then translating those signals into task guidance, recommended actions, and auditable execution.

This is also where the shift from efficiency to effectiveness begins. Lower cost per task creates capacity. Connectivity plus guidance turns that capacity into better outcomes: higher retention and expansion, fewer coverage gaps, faster resolution, and more accessible advice at scale.

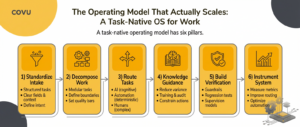

The Operating Model That Actually Scales: A Task-Native OS for Work

A task-native operating model has six pillars.

1) Standardize intake

Turn messy inbound work into structured tasks with clear fields, context, and intent.

2) Decompose work into modular tasks

Define task boundaries, inputs, outputs, and quality bars. Smaller tasks are easier to route, test, and improve.
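
To make pillars 1 and 2 concrete, here is one possible shape for a task definition, sketched under assumptions: the `TaskSpec` type, the field names, and the certificate-of-insurance example are hypothetical and chosen only for illustration. The point is that a task with explicit inputs, outputs, and a quality bar can be validated at intake before anyone, human or AI, starts work on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskSpec:
    """Hypothetical definition of one modular task type."""
    name: str
    required_inputs: tuple[str, ...]  # fields intake must supply
    outputs: tuple[str, ...]          # fields the task must produce
    min_quality: float                # quality bar on an illustrative 0.0 to 1.0 scale
    needs_license: bool = False       # True if a licensed person must own the action


def missing_inputs(spec: TaskSpec, payload: dict) -> list[str]:
    """Return required input fields that are absent or empty in an intake payload."""
    return [f for f in spec.required_inputs if payload.get(f) in (None, "")]


# Illustrative task type; the field names are invented for this example.
coi_request = TaskSpec(
    name="issue_certificate_of_insurance",
    required_inputs=("policy_number", "certificate_holder", "requested_by"),
    outputs=("certificate_pdf",),
    min_quality=0.99,
)

payload = {"policy_number": "P-1001", "certificate_holder": "", "requested_by": "client"}
print(missing_inputs(coi_request, payload))  # ['certificate_holder']
```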

3) Route tasks across execution layers

Route each task to the lowest-cost layer that can meet the required quality and compliance:

- AI for bounded, repeatable cognitive tasks

- automation tools for deterministic steps

- humans for authority-bound actions, complex judgment, and edge cases

- specialized teams for throughput where appropriate
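
The routing rule above can be sketched in a few lines. This is a simplified illustration, not a production router: the `Layer` type, the cost figures, and the quality rates are made-up assumptions for a single task type, and a real system would measure them per task type from its own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    cost_per_task: float     # assumed fully loaded cost, in dollars
    expected_quality: float  # assumed pass rate for this task type
    licensed: bool           # can this layer take authority-bound actions?


def route(layers: list[Layer], min_quality: float, needs_license: bool) -> Layer:
    """Pick the cheapest layer that meets the quality bar and licensing need."""
    eligible = [
        layer for layer in layers
        if layer.expected_quality >= min_quality
        and (layer.licensed or not needs_license)
    ]
    if not eligible:
        raise ValueError("no layer meets the quality and compliance requirements")
    return min(eligible, key=lambda layer: layer.cost_per_task)


# Illustrative numbers for one task type; none of these are measured values.
LAYERS = [
    Layer("ai", 0.40, 0.97, licensed=False),
    Layer("specialized_team", 3.00, 0.985, licensed=False),
    Layer("licensed_human", 12.00, 0.99, licensed=True),
]

print(route(LAYERS, min_quality=0.95, needs_license=False).name)  # ai
print(route(LAYERS, min_quality=0.98, needs_license=False).name)  # specialized_team
print(route(LAYERS, min_quality=0.95, needs_license=True).name)   # licensed_human
```

Raising an error when no layer qualifies is a deliberate choice in this sketch: a task that nothing can execute to standard should surface as a design gap rather than be routed silently to the cheapest option.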

4) Embed knowledge work guidance

Most regulated workflows are not “hard” because the steps are complex. They are hard because the right decision depends on context, carrier rules, past precedent, underwriting logic, state-specific constraints, and institutional memory.

A scalable operating model therefore needs a guidance layer that sits inside execution, not as a wiki nobody reads. Guidance is how you reduce variance, training time, rework, and audit risk while raising the floor of execution across humans and AI.

This is also why the “agent” framing misses the point. The biggest leverage is not that an agent can act. The biggest leverage is that the system can teach and constrain action in a way that scales across humans and machines.

5) Build verification and quality control into the workflow

This is the difference between demos and production. Guardrails, regression tests, supervision models, audits, and escalation are not optional. Decagon’s “layered guardrails” and Sierra’s “supervisor” approach are both evidence that production systems require structured verification.

6) Instrument the system with task-level economics

Measure cost per task, error rate, rework, time to resolution, escalation rate, and customer outcomes. Use this to improve routing rules, guidance, playbooks, and automation coverage over time.
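
A minimal sketch of task-level instrumentation follows, assuming each completed task leaves behind a simple record. The `TaskRecord` fields and the sample values are hypothetical; customer outcomes are left out because they depend on how a given organization defines them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    cost: float      # dollars spent executing the task
    minutes: float   # time to resolution
    had_error: bool
    reworked: bool
    escalated: bool


def summarize(records: list[TaskRecord]) -> dict[str, float]:
    """Compute per-task unit economics for one task type."""
    n = len(records)
    if n == 0:
        raise ValueError("no task records to summarize")
    return {
        "cost_per_task": sum(r.cost for r in records) / n,
        "avg_minutes_to_resolution": sum(r.minutes for r in records) / n,
        "error_rate": sum(r.had_error for r in records) / n,
        "rework_rate": sum(r.reworked for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
    }


# Made-up sample data for illustration only.
records = [
    TaskRecord(1.0, 10, had_error=False, reworked=False, escalated=False),
    TaskRecord(1.0, 20, had_error=False, reworked=False, escalated=False),
    TaskRecord(2.0, 30, had_error=True, reworked=True, escalated=True),
    TaskRecord(4.0, 60, had_error=False, reworked=False, escalated=True),
]
print(summarize(records))
```

Tracked per task type and per execution layer over time, figures like these are what allow routing rules and automation coverage to be adjusted on evidence rather than intuition.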

This is where compounding happens. The system gets cheaper and better as it learns.

McKinsey’s point about productivity J-curves matters here: real gains follow process and organizational redesign, not the initial technology drop-in.

The Honest Argument About AI Agents: Where They Shine, Where They Break

AI agents are improving rapidly. In many contexts, they are already useful in production. The question is not whether agents work. The question is where they create durable enterprise value.

Agents perform well in bounded environments where inputs are structured and outputs can be verified.

They are effective at:

- Intake triage and routing suggestions

- Document extraction and summarization

- Drafting and communication assistance

- Tool execution within tightly constrained workflows

- Decision support when paired with verification

In these contexts, agents reduce cognitive load and improve throughput. They are meaningful accelerators when embedded inside structured systems.

But agents struggle when the operating environment itself is ambiguous.

They break in:

- Long chains of actions with brittle dependencies

- High-exception-density environments without structured escalation

- Unclear decision rights and ownership boundaries

- Disconnected systems without robust context plumbing

- Compliance-heavy steps without enforceable procedural logic

- Data absence and data incompleteness

In these environments, the limiting factor is not model capability. It is operational design.

This is why the agentic future is real but uneven. Organizations that win will not be those that deploy agents indiscriminately. They will be those that redesign work into modular tasks, build governance into execution, and expand automation coverage deliberately.

The path forward is not immediate end-to-end autonomy. It is structured task displacement.

A Practical Blueprint: What a Modern Operating Stack Requires

If agents are an execution layer rather than the unit of transformation, then the structural question becomes unavoidable: what does a system designed around tasks actually require?

In regulated and exception-heavy industries, transformation does not begin with intelligence. It begins with architecture. Task displacement only becomes viable when execution itself is redesigned.

A modern operating model requires five foundational components.

First, a connectivity layer that can reliably pull and write context across fragmented systems. In insurance distribution, critical information is dispersed across carrier portals, AMS platforms, underwriting documentation, and internal records. Without consistent access to this context, even highly capable AI systems operate on incomplete inputs. In regulated environments, incomplete context introduces operational and compliance risk. Connectivity is therefore not an enhancement. It is a prerequisite.

Second, an AI execution layer capable of handling bounded cognitive work. This includes document extraction, structured drafting, tool interaction, and contextual decision support. The key constraint is boundedness. AI performs best when tasks are clearly defined and outputs can be verified. Autonomy must operate within structured limits.

Third, an orchestration layer that decomposes work into modular tasks, routes those tasks to the appropriate execution layer, governs decision rights, and measures performance at the task level. This represents the shift from managing workflows to governing execution. Without orchestration, automation remains fragmented and difficult to scale.

Fourth, a service engine layer that integrates human authority and embedded playbooks into the system. In regulated industries, certain decisions require licensed accountability and contextual judgment. Rather than viewing human involvement as a failure of automation, the operating model should treat it as a defined execution layer with clear escalation rules and structured guidance.

Fifth, a quality layer responsible for supervision, auditing, regression testing, and feedback integration. Production systems require verification. Without structured oversight and measurable feedback loops, automation increases variance rather than reducing it.

Together, these components form what can reasonably be described as an operating system for work. The emphasis is not on isolated intelligence, but on governed execution. The objective is not immediate end-to-end autonomy, but measurable, compounding improvement at the task level.

When workflows remain bundled and ambiguous, AI functions primarily as an assistant. When work is decomposed, decision rights are explicit, and performance is measured per unit of execution, intelligence becomes part of a system that improves over time.

That distinction determines whether AI remains incremental or becomes transformative.

From Blueprint to Build

The operating architecture described above is not intended as an abstract framework. It reflects a set of design principles that can be implemented in production environments, particularly in regulated and exception-heavy industries such as insurance distribution.

Over the past several years, we have been rebuilding the COVU technology stack around these principles: task-level decomposition, governed orchestration, bounded AI execution, structured connectivity, embedded human authority, and measurable unit economics.

In the coming weeks, we will publish a detailed overview of the COVU technology stack architecture. That release will describe how these layers are structured, integrated across fragmented infrastructure, and operated within real-world distribution workflows.

The objective is not to introduce another agent into the market. It is to operationalize the architectural model outlined in this paper.

The distinction between theory and transformation lies in implementation.

Conclusion

AI agents are real, and they will keep improving. But the decisive advantage will not come from agent demos layered onto legacy operations. It will come from reinventing workflows so AI can reliably execute inside them, and building the connectivity layer that turns risk and operational data into customer action.

Insurance is the stress test. It is licensed, exception-heavy, and fragmented across systems. If task-native, workflow-first operations can scale here, the pattern generalizes.

The limiting factor is no longer model capability. It is the discipline to redesign execution.

Agent orchestration will remain visible. Workflow reinvention, connectivity, and task displacement will determine who actually transforms.